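Building a Kubernetes Cluster from Binaries

1. Kubernetes-related links

1.1. GitHub repository

https://github.com/kubernetes/kubernetes

1.2. Official website

https://kubernetes.io/zh-cn/

2. Download the Kubernetes binary package

2.1. Download URL: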

https://dl.k8s.io/v1.31.3/kubernetes-server-linux-amd64.tar.gz

2. Set up passwordless SSH from node-exporter41 to the cluster and sync data

2.1 Set the hostnames and /etc/hosts name resolution

[root@node-exporter41 ~]# cat >> /etc/hosts <<'EOF'

10.0.0.240 apiserver-lb

10.0.0.41 node-ex01

10.0.0.42 node-ex02

10.0.0.43 node-ex03

EOF

2.2 Configure passwordless login to the other nodes

[root@node-exporter41 ~]# cat > password_free_login.sh <<'EOF'

#!/bin/bash

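# author: Jason Yin

# Create the key pair

ssh-keygen -t rsa -P "" -f /root/.ssh/id_rsa -q

# Set the server password. All nodes should share the same password; otherwise this script needs further work

export mypasswd=1

# Define the host list

k8s_host_list=(node-ex01 node-ex02 node-ex03)

# Set up passwordless login, using expect to answer the prompts non-interactively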

for i in ${k8s_host_list[@]};do

expect -c "

spawn ssh-copy-id -i /root/.ssh/id_rsa.pub root@$i

expect {

\"*yes/no*\" {send \"yes\r\"; exp_continue}

\"*password*\" {send \"$mypasswd\r\"; exp_continue}

}"

done

EOF

[root@node-exporter41 ~]# bash password_free_login.sh

2.3 Write the sync script

[root@node-exporter41 ~]# cat > /usr/local/sbin/data_rsync.sh <<'EOF'

#!/bin/bash

# Author: Jason Yin

if [ $# -lt 1 ];then

echo "Usage: $0 /path/to/file (absolute path) [mode: m|w]"

exit 1

fi

if [ ! -e "$1" ];then

echo "[ $1 ] dir or file not found!"

exit 1

fi

fullpath=`dirname $1`

basename=`basename $1`

cd $fullpath

case $2 in

WORKER_NODE|w)

K8S_NODE=(node-ex01 node-ex02 node-ex03)

;;

MASTER_NODE|m)

K8S_NODE=(node-ex01 node-ex02 node-ex03)

;;

*)

K8S_NODE=(node-ex01 node-ex02 node-ex03)

;;

esac

for host in ${K8S_NODE[@]};do

tput setaf 2

echo ===== rsyncing ${host}: $basename =====

tput setaf 7

rsync -az $basename `whoami`@${host}:$fullpath

if [ $? -eq 0 ];then

echo "Command succeeded!"

fi

done

EOF

[root@node-exporter41 ~]# chmod +x /usr/local/sbin/data_rsync.sh

[root@node-exporter41 ~]#

2.4 Sync the "/etc/hosts" file to the cluster

[root@node-exporter41 ~]# data_rsync.sh /etc/hosts

3. Baseline Linux tuning on all nodes

3.1 Disable NetworkManager and ufw on all nodes

systemctl disable --now NetworkManager ufw

3.2 Turn off swap on all nodes and comment out the swap entry in fstab

swapoff -a && sysctl -w vm.swappiness=0

sed -ri '/^[^#]*swap/s@^@#@' /etc/fstab

free -h
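To confirm that no swap device is still active after the commands above, the swap table can be counted instead of reading the `free -h` output by eye. This is a minimal sketch: `swap_devices` is a hypothetical helper name, and it assumes the usual `/proc/swaps` layout of one header line followed by one line per active device.

```shell
# Count active swap devices in a file that uses the /proc/swaps format.
# swap_devices is a hypothetical helper, not part of any package.
swap_devices() {
  # $1 = file to read (defaults to /proc/swaps); line 1 is the header.
  awk 'NR > 1 { n++ } END { print n + 0 }' "${1:-/proc/swaps}"
}

# Example on a node: a result of 0 means swap is fully off.
# swap_devices
```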

3.3 Manually sync the time zone and time on all nodes

ln -svf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime

3.4 Configure limits on all nodes

cat >> /etc/security/limits.conf <<'EOF'

* soft nofile 655360

* hard nofile 655360

* soft nproc 655350

* hard nproc 655350

* soft memlock unlimited

* hard memlock unlimited

EOF
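A soft limit cannot be higher than the matching hard limit, so it is worth checking the file for such pairs before logging in again. The following is a rough sketch, assuming the four-column `domain type item value` layout used above; `check_limits` is a hypothetical helper name.

```shell
# Report limits.conf entries whose soft value exceeds the hard value.
# check_limits is a hypothetical helper, not part of pam_limits.
check_limits() {
  # $1 = limits file; comment lines and blank lines are skipped.
  awk '
    /^[[:space:]]*(#|$)/ { next }
    $2 == "soft" { soft[$1 " " $3] = $4 }
    $2 == "hard" { hard[$1 " " $3] = $4 }
    END {
      bad = 0
      for (k in soft) {
        if (!(k in hard)) continue
        if (hard[k] == "unlimited") continue
        if (soft[k] == "unlimited" || soft[k] + 0 > hard[k] + 0) {
          print "soft > hard for: " k
          bad = 1
        }
      }
      exit bad
    }
  ' "$1"
}

# Example on a node:
# check_limits /etc/security/limits.conf
```

The function prints one line per offending pair and returns a non-zero status if it finds any, so it can also gate a provisioning script.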

3.5. Tune the sshd service on all nodes

sed -i 's@#UseDNS yes@UseDNS no@g' /etc/ssh/sshd_config

sed -i 's@^GSSAPIAuthentication yes@GSSAPIAuthentication no@g' /etc/ssh/sshd_config

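- UseDNS:

When this option is on and a client tries to log in, the SSH server first does a reverse DNS (PTR) lookup on the client's IP address to get a hostname, then does a forward (A record) lookup on that hostname and checks that the result matches the original IP. This is meant to guard against client spoofing, but clients on dynamic IPs usually have no PTR record, so the lookup only wastes time. It is better to turn it off.

- GSSAPIAuthentication:

When this option is on (GSSAPIAuthentication yes), SSH logins can be very slow because GSSAPI is enabled on the server. During login the client does a reverse lookup on the server's IP address, and if that address has no PTR record the login tends to stall there.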
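The two sed commands only change lines that match their patterns exactly, so it helps to read the values back afterwards. The sketch below relies on the documented sshd_config rule that the first value found for a keyword wins; `sshd_option` is a hypothetical helper name, and it deliberately ignores `Match` blocks and `Include` files.

```shell
# Print the first uncommented value of a keyword in an sshd_config-style file.
# sshd_option is a hypothetical helper; keywords are matched case-insensitively.
sshd_option() {
  # $1 = config file, $2 = keyword
  awk -v key="$2" '
    /^[[:space:]]*#/ { next }
    tolower($1) == tolower(key) { print $2; exit }
  ' "$1"
}

# Example on a node (empty output means the compiled-in default applies):
# sshd_option /etc/ssh/sshd_config UseDNS
# sshd_option /etc/ssh/sshd_config GSSAPIAuthentication
```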

3.6 Linux kernel tuning

cat > /etc/sysctl.d/k8s.conf <<'EOF'

# The following 3 parameters are kernel parameters that containerd depends on

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

net.ipv6.conf.all.disable_ipv6 = 1

fs.may_detach_mounts = 1

vm.overcommit_memory=1

vm.panic_on_oom=0

fs.inotify.max_user_watches=89100

fs.file-max=52706963

fs.nr_open=52706963

net.netfilter.nf_conntrack_max=2310720

net.ipv4.tcp_keepalive_time = 600

net.ipv4.tcp_keepalive_probes = 3

net.ipv4.tcp_keepalive_intvl =15

net.ipv4.tcp_max_tw_buckets = 36000

net.ipv4.tcp_tw_reuse = 1

net.ipv4.tcp_max_orphans = 327680

net.ipv4.tcp_orphan_retries = 3

net.ipv4.tcp_syncookies = 1

net.ipv4.tcp_max_syn_backlog = 16384

net.ipv4.ip_conntrack_max = 65536

net.ipv4.tcp_timestamps = 0

net.core.somaxconn = 16384

EOF

sysctl --system
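After `sysctl --system`, the applied values can be read back from `/proc/sys`, where each dot in a key becomes a path separator. This is a small sketch under that assumption; `sysctl_path` is a hypothetical helper name, and keys that embed an interface name containing dots are not handled.

```shell
# Map a sysctl key to its file under /proc/sys.
# sysctl_path is a hypothetical helper name.
sysctl_path() {
  echo "/proc/sys/$(echo "$1" | tr . /)"
}

# Read back a few of the keys set above; a key that does not exist on the
# running kernel (or whose module is not loaded) is reported instead of failing.
for key in net.ipv4.ip_forward net.bridge.bridge-nf-call-iptables net.core.somaxconn; do
  path=$(sysctl_path "$key")
  if [ -r "$path" ]; then
    echo "$key = $(cat "$path")"
  else
    echo "$key is not available on this kernel"
  fi
done
```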

3.7 Change the terminal prompt colors [optional]

cat <<EOF >> ~/.bashrc

PS1='[\[\e[34;1m\]\u@\[\e[0m\]\[\e[32;1m\]\H\[\e[0m\]\[\e[31;1m\] \W\[\e[0m\]]# '

EOF

source ~/.bashrc

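4. Install ipvsadm on all nodes for kube-proxy load balancing

4.1 Install ipvsadm and related tools on all nodes

apt -y install ipvsadm ipset sysstat conntrack

4.2 On all nodes, create the config file for modules to load at boot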

cat > /etc/modules-load.d/ipvs.conf << 'EOF'

ip_vs

ip_vs_lc

ip_vs_wlc

ip_vs_rr

ip_vs_wrr

ip_vs_lblc

ip_vs_lblcr

ip_vs_dh

ip_vs_sh

ip_vs_fo

ip_vs_nq

ip_vs_sed

ip_vs_ftp

nf_conntrack

ip_tables

ip_set

xt_set

ipt_set

ipt_rpfilter

ipt_REJECT

ipip

EOF

4.3 Shut down and take a snapshot

init 0

4.4 After booting again, verify the loaded modules

lsmod | grep --color=auto -e ip_vs -e nf_conntrack

uname -r

ifconfig

Note:

Linux kernel 4.19+ renamed the former "nf_conntrack_ipv4" module to "nf_conntrack".
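If the same module list has to serve hosts with different kernels, the conntrack module name can be picked from the kernel release. A minimal sketch of that rule; `conntrack_module` is a hypothetical helper name and it only looks at the major and minor numbers of the release string.

```shell
# Choose the conntrack module name for a given kernel release string.
# conntrack_module is a hypothetical helper name.
conntrack_module() {
  # $1 = kernel release, e.g. the output of `uname -r`
  release=${1%%-*}        # drop the distribution suffix after the first dash
  major=${release%%.*}
  rest=${release#*.}
  minor=${rest%%.*}
  if [ "$major" -gt 4 ] || { [ "$major" -eq 4 ] && [ "$minor" -ge 19 ]; }; then
    echo nf_conntrack
  else
    echo nf_conntrack_ipv4
  fi
}

# Example on a node:
# modprobe "$(conntrack_module "$(uname -r)")"
```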

5. Deploy the containerd environment with a script

5.1 Install containerd online

https://www.cnblogs.com/yinzhengjie/p/18265994#二安装containerd组件

5.2. Verify the containerd version

[root@node-exporter41 ~]# ctr version

Client:

Version: v1.6.36

Revision: 88c3d9bc5b5a193f40b7c14fa996d23532d6f956

Go version: go1.22.7

Server:

Version: v1.6.36

Revision: 88c3d9bc5b5a193f40b7c14fa996d23532d6f956

UUID: 32018b7d-979c-4936-ad14-ec51de4c63b4

[root@node-ex01 ~]#

6. Install the Kubernetes binaries

1. Download the package

[root@node-ex01 ~]# wget https://dl.k8s.io/v1.31.3/kubernetes-server-linux-amd64.tar.gz

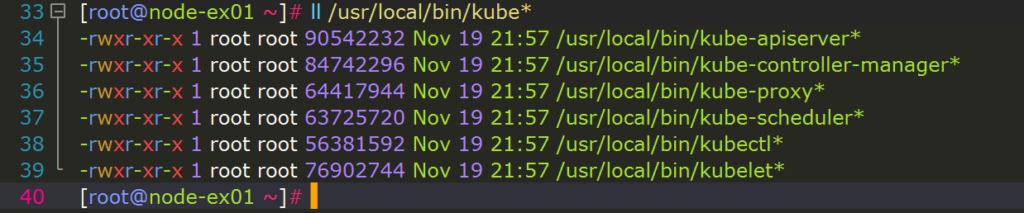

2. Extract the Kubernetes binaries into a directory on PATH

[root@node-ex01 ~]# tar -xf kubernetes-server-linux-amd64.tar.gz --strip-components=3 -C /usr/local/bin kubernetes/server/bin/kube{let,ctl,-apiserver,-controller-manager,-scheduler,-proxy}

[root@node-ex01 ~]# ll /usr/local/bin/kube*

-rwxr-xr-x 1 root root 90542232 Nov 19 21:57 /usr/local/bin/kube-apiserver*

-rwxr-xr-x 1 root root 84742296 Nov 19 21:57 /usr/local/bin/kube-controller-manager*

-rwxr-xr-x 1 root root 56381592 Nov 19 21:57 /usr/local/bin/kubectl*

-rwxr-xr-x 1 root root 76902744 Nov 19 21:57 /usr/local/bin/kubelet*

-rwxr-xr-x 1 root root 64417944 Nov 19 21:57 /usr/local/bin/kube-proxy*

-rwxr-xr-x 1 root root 63725720 Nov 19 21:57 /usr/local/bin/kube-scheduler*

[root@node-ex01 ~]#

3. Check the version of each Kubernetes component

[root@node-ex01 ~]# kubelet --version

Kubernetes v1.31.3

[root@node-ex01 ~]#

[root@node-ex01 ~]# kube-proxy --version

Kubernetes v1.31.3

[root@node-ex01 ~]#

[root@node-ex01 ~]# kube-apiserver --version

Kubernetes v1.31.3

[root@node-ex01 ~]#

[root@node-ex01 ~]# kube-scheduler --version

Kubernetes v1.31.3

[root@node-ex01 ~]#

[root@node-ex01 ~]# kube-controller-manager --version

Kubernetes v1.31.3

[root@node-ex01 ~]#

[root@node-ex01 ~]# kubectl version

Client Version: v1.31.3

Kustomize Version: v5.4.2

The connection to the server localhost:8080 was refused - did you specify the right host or port?

[root@node-ex01 ~]#

4. Copy the binaries to the other nodes

[root@node-exporter41 ~]# scp /usr/local/bin/kube* node-ex02:/usr/local/bin/

kube-apiserver 100% 86MB 97.3MB/s 00:00

kube-controller-manager 100% 81MB 122.1MB/s 00:00

kubectl 100% 54MB 136.7MB/s 00:00

kubelet 100% 73MB 236.8MB/s 00:00

kube-proxy 100% 61MB 112.7MB/s 00:00

kube-scheduler 100% 61MB 105.4MB/s 00:00

[root@node-ex01 ~]#

[root@node-exporter41 ~]# scp /usr/local/bin/kube* node-ex03:/usr/local/bin/

kube-apiserver 100% 86MB 113.2MB/s 00:00

kube-controller-manager 100% 81MB 122.8MB/s 00:00

kubectl 100% 54MB 139.2MB/s 00:00

kubelet 100% 73MB 235.2MB/s 00:00

kube-proxy 100% 61MB 105.7MB/s 00:00

kube-scheduler 100% 61MB 99.0MB/s 00:00

[root@node-ex01 ~]#

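7. Generate certificates for the Kubernetes components

1 Create the Kubernetes certificate directory on all nodes

mkdir -pv /oldboyedu/certs/kubernetes/

2 Generate the self-signed Kubernetes CA certificate on node-exporter41

2.1 Create the CSR file: the certificate signing request, which carries fields such as the common name, organization, and organizational unit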

[root@node-ex01 ~]# mkdir /oldboyedu/certs/pki && cd /oldboyedu/certs/pki

[root@node-ex01 ~]#

[root@node-ex01 ~]# cat > k8s-ca-csr.json <<EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "Kubernetes",

"OU": "Kubernetes-manual"

}

],

"ca": {

"expiry": "876000h"

}

}

EOF

2.2 Generate the Kubernetes CA certificate

[root@node-ex01 ~]# cfssl gencert -initca k8s-ca-csr.json | cfssljson -bare /oldboyedu/certs/kubernetes/k8s-ca

[root@node-ex01 ~]#

[root@node-ex01 ~]# ll /oldboyedu/certs/kubernetes/k8s-ca*

-rw-r--r-- 1 root root 1070 Dec 2 11:46 /oldboyedu/certs/kubernetes/k8s-ca.csr

-rw------- 1 root root 1679 Dec 2 11:46 /oldboyedu/certs/kubernetes/k8s-ca-key.pem

-rw-r--r-- 1 root root 1363 Dec 2 11:46 /oldboyedu/certs/kubernetes/k8s-ca.pem

[root@node-ex01 ~]#

3. On node-exporter41, issue the apiserver certificates from the self-signed CA

3.1 Create the signing config, with a certificate validity of 100 years

[root@node-ex01 ~]# cat > k8s-ca-config.json <<EOF

{

"signing": {

"default": {

"expiry": "876000h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "876000h"

}

}

}

}

EOF
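The "expiry" value is given in hours. As a quick sanity check of the 100-year figure, the conversion can be done with shell arithmetic. This sketch ignores leap days, and `hours_to_years` is a hypothetical helper name.

```shell
# Convert a cfssl-style expiry in hours to whole years (365-day years).
# hours_to_years is a hypothetical helper name.
hours_to_years() {
  echo $(( $1 / 24 / 365 ))
}

hours_to_years 876000   # prints 100
```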

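3.2 Create the CSR file for the apiserver certificate: the certificate signing request, which carries fields such as the common name, organization, and organizational unit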

[root@node-ex01 ~]# cat > apiserver-csr.json <<EOF

{

"CN": "kube-apiserver",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "Kubernetes",

"OU": "Kubernetes-manual"

}

]

}

EOF

3.3 Generate the apiserver certificate files from the self-signed CA

[root@node-ex01 ~]# cfssl gencert \

-ca=/oldboyedu/certs/kubernetes/k8s-ca.pem \

-ca-key=/oldboyedu/certs/kubernetes/k8s-ca-key.pem \

-config=k8s-ca-config.json \

--hostname=10.200.0.1,10.0.0.240,kubernetes,kubernetes.default,kubernetes.default.svc,kubernetes.default.svc.oldboyedu,kubernetes.default.svc.oldboyedu.com,10.0.0.41,10.0.0.42,10.0.0.43 \

--profile=kubernetes \

apiserver-csr.json | cfssljson -bare /oldboyedu/certs/kubernetes/apiserver

[root@node-ex01 ~]# ll /oldboyedu/certs/kubernetes/apiserver*

-rw-r--r-- 1 root root 1293 Dec 2 11:49 /oldboyedu/certs/kubernetes/apiserver.csr

-rw------- 1 root root 1679 Dec 2 11:49 /oldboyedu/certs/kubernetes/apiserver-key.pem

-rw-r--r-- 1 root root 1688 Dec 2 11:49 /oldboyedu/certs/kubernetes/apiserver.pem

[root@node-ex01 pki]#
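The first entry in the `--hostname` list, 10.200.0.1, is the first usable address of the Service CIDR (10.200.0.0/16 in this setup). If you use a different Service CIDR, that address can be derived rather than typed by hand. A rough sketch for IPv4 only; `first_service_ip` is a hypothetical helper name.

```shell
# Print the first host address of an IPv4 CIDR (network address + 1).
# first_service_ip is a hypothetical helper name.
first_service_ip() {
  cidr=$1
  ip=${cidr%/*}
  bits=${cidr#*/}
  # Split the dotted quad into the positional parameters $1..$4.
  old_ifs=$IFS; IFS=.
  set -- $ip
  IFS=$old_ifs
  n=$(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
  mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  first=$(( (n & mask) + 1 ))
  echo "$(( (first >> 24) & 255 )).$(( (first >> 16) & 255 )).$(( (first >> 8) & 255 )).$(( first & 255 ))"
}

first_service_ip 10.200.0.0/16   # prints 10.200.0.1
```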

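Note:

"10.200.0.1" is the first address of our Service (svc) CIDR; adjust it to match your own environment.

"10.0.0.240" is the VIP of the load balancer.

"kubernetes,...,kubernetes.default.svc.oldboyedu.com" are the DNS names under which the apiserver is reached.

"10.0.0.41,10.0.0.42,10.0.0.43" are the IP addresses of the Kubernetes cluster nodes.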

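4 Generate the aggregation certificates that third-party components use to talk to the apiserver

The aggregation certificates let third-party components (such as metrics-server) use these certificate files to communicate with the apiserver.

4.1 Create the CSR file for the aggregation layer's self-signed CA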

[root@node-ex01 pki]# cat > front-proxy-ca-csr.json <<EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

}

}

EOF

4.2 Generate the aggregation layer's self-signed CA certificate

[root@node-exporter41 pki]# cfssl gencert -initca front-proxy-ca-csr.json | cfssljson -bare /oldboyedu/certs/kubernetes/front-proxy-ca

[root@node-exporter41 pki]#

[root@node-exporter41 pki]# ll /oldboyedu/certs/kubernetes/front-proxy-ca*

-rw-r--r-- 1 root root 891 Dec 2 11:52 /oldboyedu/certs/kubernetes/front-proxy-ca.csr

-rw------- 1 root root 1675 Dec 2 11:52 /oldboyedu/certs/kubernetes/front-proxy-ca-key.pem

-rw-r--r-- 1 root root 1094 Dec 2 11:52 /oldboyedu/certs/kubernetes/front-proxy-ca.pem

[root@node-exporter41 pki]#

4.3 Create the CSR file for the aggregation layer's client certificate

[root@node-ex01 pki]# cat > front-proxy-client-csr.json <<EOF

{

"CN": "front-proxy-client",

"key": {

"algo": "rsa",

"size": 2048

}

}

EOF

4.4 Sign the aggregation layer's client certificate with the aggregation layer's self-signed CA

[root@node-ex01 pki]# cfssl gencert \

-ca=/oldboyedu/certs/kubernetes/front-proxy-ca.pem \

-ca-key=/oldboyedu/certs/kubernetes/front-proxy-ca-key.pem \

-config=k8s-ca-config.json \

-profile=kubernetes \

front-proxy-client-csr.json | cfssljson -bare /oldboyedu/certs/kubernetes/front-proxy-client

[root@node-exporter41 pki]#

[root@node-exporter41 pki]# ll /oldboyedu/certs/kubernetes/front-proxy-client*

-rw-r--r-- 1 root root 903 Dec 2 11:53 /oldboyedu/certs/kubernetes/front-proxy-client.csr

-rw------- 1 root root 1679 Dec 2 11:53 /oldboyedu/certs/kubernetes/front-proxy-client-key.pem

-rw-r--r-- 1 root root 1188 Dec 2 11:53 /oldboyedu/certs/kubernetes/front-proxy-client.pem

[root@node-exporter41 pki]#

5. Generate the controller-manager certificate and kubeconfig file

5.1 Create the controller-manager CSR file

[root@node-ex01 pki]# cat > controller-manager-csr.json <<EOF

{

"CN": "system:kube-controller-manager",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:kube-controller-manager",

"OU": "Kubernetes-manual"

}

]

}

EOF

5.2 Generate the controller-manager certificate files

[root@node-ex01 pki]# cfssl gencert \

-ca=/oldboyedu/certs/kubernetes/k8s-ca.pem \

-ca-key=/oldboyedu/certs/kubernetes/k8s-ca-key.pem \

-config=k8s-ca-config.json \

-profile=kubernetes \

controller-manager-csr.json | cfssljson -bare /oldboyedu/certs/kubernetes/controller-manager

[root@node-exporter41 pki]# ll /oldboyedu/certs/kubernetes/controller-manager*

-rw-r--r-- 1 root root 1082 Dec 2 11:55 /oldboyedu/certs/kubernetes/controller-manager.csr

-rw------- 1 root root 1675 Dec 2 11:55 /oldboyedu/certs/kubernetes/controller-manager-key.pem

-rw-r--r-- 1 root root 1501 Dec 2 11:55 /oldboyedu/certs/kubernetes/controller-manager.pem

[root@node-exporter41 pki]#

5.3 Create a kubeconfig directory

[root@node-ex01 pki]# mkdir -pv /oldboyedu/certs/kubeconfig

5.4 Set the cluster entry

[root@node-ex01 pki]# kubectl config set-cluster yinzhengjie-k8s \

--certificate-authority=/oldboyedu/certs/kubernetes/k8s-ca.pem \

--embed-certs=true \

--server=https://10.0.0.240:8443 \

--kubeconfig=/oldboyedu/certs/kubeconfig/kube-controller-manager.kubeconfig

5.5 Set the user entry

[root@node-ex01 pki]# kubectl config set-credentials system:kube-controller-manager \

--client-certificate=/oldboyedu/certs/kubernetes/controller-manager.pem \

--client-key=/oldboyedu/certs/kubernetes/controller-manager-key.pem \

--embed-certs=true \

--kubeconfig=/oldboyedu/certs/kubeconfig/kube-controller-manager.kubeconfig

5.6 Set the context

[root@node-ex01 pki]# kubectl config set-context system:kube-controller-manager@kubernetes \

--cluster=yinzhengjie-k8s \

--user=system:kube-controller-manager \

--kubeconfig=/oldboyedu/certs/kubeconfig/kube-controller-manager.kubeconfig

5.7 Use this context as the default

[root@node-ex01 pki]# kubectl config use-context system:kube-controller-manager@kubernetes \

--kubeconfig=/oldboyedu/certs/kubeconfig/kube-controller-manager.kubeconfig

6. Generate the scheduler certificate and kubeconfig file

6.1 Create the scheduler CSR file

[root@node-ex01 pki]# cat > scheduler-csr.json <<EOF

{

"CN": "system:kube-scheduler",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:kube-scheduler",

"OU": "Kubernetes-manual"

}

]

}

EOF

6.2 Generate the scheduler certificate files

[root@node-ex01 pki]# cfssl gencert \

-ca=/oldboyedu/certs/kubernetes/k8s-ca.pem \

-ca-key=/oldboyedu/certs/kubernetes/k8s-ca-key.pem \

-config=k8s-ca-config.json \

-profile=kubernetes \

scheduler-csr.json | cfssljson -bare /oldboyedu/certs/kubernetes/scheduler

[root@node-ex01 pki]# ll /oldboyedu/certs/kubernetes/scheduler*

-rw-r--r-- 1 root root 1058 Dec 2 12:02 /oldboyedu/certs/kubernetes/scheduler.csr

-rw------- 1 root root 1675 Dec 2 12:02 /oldboyedu/certs/kubernetes/scheduler-key.pem

-rw-r--r-- 1 root root 1476 Dec 2 12:02 /oldboyedu/certs/kubernetes/scheduler.pem

[root@node-ex01 pki]#

6.3. Set the cluster entry

[root@node-ex01 pki]# kubectl config set-cluster yinzhengjie-k8s \

--certificate-authority=/oldboyedu/certs/kubernetes/k8s-ca.pem \

--embed-certs=true \

--server=https://10.0.0.240:8443 \

--kubeconfig=/oldboyedu/certs/kubeconfig/kube-scheduler.kubeconfig

6.4 Set the user entry

[root@node-ex01 pki]# kubectl config set-credentials system:kube-scheduler \

--client-certificate=/oldboyedu/certs/kubernetes/scheduler.pem \

--client-key=/oldboyedu/certs/kubernetes/scheduler-key.pem \

--embed-certs=true \

--kubeconfig=/oldboyedu/certs/kubeconfig/kube-scheduler.kubeconfig

6.5. Set the context

[root@node-ex01 pki]# kubectl config set-context system:kube-scheduler@kubernetes \

--cluster=yinzhengjie-k8s \

--user=system:kube-scheduler \

--kubeconfig=/oldboyedu/certs/kubeconfig/kube-scheduler.kubeconfig

6.6 Use this context as the default

[root@node-ex01 pki]# kubectl config use-context system:kube-scheduler@kubernetes \

--kubeconfig=/oldboyedu/certs/kubeconfig/kube-scheduler.kubeconfig

7. Generate the cluster administrator certificate and kubeconfig file

7.1 Create the administrator CSR file

[root@node-ex01 pki]# cat > admin-csr.json <<EOF

{

"CN": "admin",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:masters",

"OU": "Kubernetes-manual"

}

]

}

EOF

7.2 Generate the cluster administrator certificate

[root@node-ex01 pki]# cfssl gencert \

-ca=/oldboyedu/certs/kubernetes/k8s-ca.pem \

-ca-key=/oldboyedu/certs/kubernetes/k8s-ca-key.pem \

-config=k8s-ca-config.json \

-profile=kubernetes \

admin-csr.json | cfssljson -bare /oldboyedu/certs/kubernetes/admin

[root@node-ex01 pki]# ll /oldboyedu/certs/kubernetes/admin*

-rw-r--r-- 1 root root 1025 Dec 2 12:07 /oldboyedu/certs/kubernetes/admin.csr

-rw------- 1 root root 1679 Dec 2 12:07 /oldboyedu/certs/kubernetes/admin-key.pem

-rw-r--r-- 1 root root 1444 Dec 2 12:07 /oldboyedu/certs/kubernetes/admin.pem

[root@node-exporter41 pki]#

7.3 Set the cluster entry

[root@node-ex01 pki]# kubectl config set-cluster yinzhengjie-k8s \

--certificate-authority=/oldboyedu/certs/kubernetes/k8s-ca.pem \

--embed-certs=true \

--server=https://10.0.0.240:8443 \

--kubeconfig=/oldboyedu/certs/kubeconfig/kube-admin.kubeconfig

7.4 Set the user entry

[root@node-ex01 pki]# kubectl config set-credentials kube-admin \

--client-certificate=/oldboyedu/certs/kubernetes/admin.pem \

--client-key=/oldboyedu/certs/kubernetes/admin-key.pem \

--embed-certs=true \

--kubeconfig=/oldboyedu/certs/kubeconfig/kube-admin.kubeconfig

7.5 Set the context

[root@node-ex01 pki]# kubectl config set-context kube-admin@kubernetes \

--cluster=yinzhengjie-k8s \

--user=kube-admin \

--kubeconfig=/oldboyedu/certs/kubeconfig/kube-admin.kubeconfig

7.6 Use this context as the default

[root@node-ex01 pki]# kubectl config use-context kube-admin@kubernetes \

--kubeconfig=/oldboyedu/certs/kubeconfig/kube-admin.kubeconfig

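8. Create the ServiceAccount key pair

8.1. ServiceAccount is one of the Kubernetes authentication mechanisms. The apiserver signs ServiceAccount tokens with a private key (sa.key) and verifies them with the matching public key (sa.pub); generate the private key first

[root@node-ex01 pki]# openssl genrsa -out /oldboyedu/certs/kubernetes/sa.key 2048

8.2 Derive sa.pub from sa.key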

[root@node-ex01 pki]# openssl rsa -in /oldboyedu/certs/kubernetes/sa.key -pubout -out /oldboyedu/certs/kubernetes/sa.pub

[root@node-ex01 pki]#

[root@node-ex01 pki]# ll /oldboyedu/certs/kubernetes/sa*

-rw------- 1 root root 1704 Dec 2 12:10 /oldboyedu/certs/kubernetes/sa.key

-rw-r--r-- 1 root root 451 Dec 2 12:11 /oldboyedu/certs/kubernetes/sa.pub

[root@node-ex01 pki]#

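9. Copy the Kubernetes component certificates from node-ex01 to the other two master nodes

9.1 Copy the Kubernetes certificates and kubeconfig files from node-ex01 to the other two master nodes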

[root@node-ex01 pki]# scp /oldboyedu/certs/kubernetes/* 10.0.0.42:/oldboyedu/certs/kubernetes/

[root@node-ex01 pki]# scp /oldboyedu/certs/kubernetes/* 10.0.0.43:/oldboyedu/certs/kubernetes/

[root@node-ex01 pki]# scp -r /oldboyedu/certs/kubeconfig/ 10.0.0.42:/oldboyedu/certs/

[root@node-ex01 pki]# scp -r /oldboyedu/certs/kubeconfig/ 10.0.0.43:/oldboyedu/certs/

9.2 On the other two nodes, verify that the file counts are correct

[root@node-ex02 ~]# ls -1 /oldboyedu/certs/kubeconfig/ | wc -l

3

[root@node-ex02 ~]#

[root@node-ex02 ~]# ls -1 /oldboyedu/certs/kubernetes | wc -l

23

[root@node-ex02 ~]#

[root@node-ex03 ~]# ls -1 /oldboyedu/certs/kubeconfig/ | wc -l

3

[root@node-ex03 ~]#

[root@node-ex03 ~]# ls -1 /oldboyedu/certs/kubernetes | wc -l

23

[root@node-ex03 ~]#

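8. Install and verify the high-availability components haproxy + keepalived

1 Install the high-availability components on all master nodes (node-exporter[41-43])

Note:

- The high-availability components could also run on two dedicated virtual machines, but to save two machines I reuse the master nodes here.

- If you install Kubernetes in a public cloud, you do not need to install these components: most public clouds do not support keepalived, so use the provider's managed load balancer instead, such as Alibaba Cloud's "SLB" or Tencent Cloud's "ELB";

- ELB is recommended. SLB has a loopback problem, meaning a server behind the SLB cannot connect back to the SLB itself, whereas Tencent Cloud has fixed this problem;

Steps:

apt-get -y install keepalived haproxy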

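2. Configure haproxy on all master nodes

Note:

- I configured the haproxy load balancer to listen on port 8443; you can change it to another port. haproxy reverse-proxies the apiserver addresses of the master nodes;

- If you do change it, be sure to update the kubeconfig files generated in the certificate steps above as well, otherwise connections to the cluster will fail;

Recommended reading:

https://www.haproxy.com/documentation/haproxy-configuration-manual/2-4r1/#2.1

Steps:

2.1 Back up the config file

cp /etc/haproxy/haproxy.cfg{,`date +%F`}

2.2 The config file content is the same on all nodes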

cat > /etc/haproxy/haproxy.cfg <<'EOF'

global

maxconn 2000

ulimit-n 16384

log 127.0.0.1 local0 err

stats timeout 30s

defaults

log global

mode http

option httplog

timeout connect 5000

timeout client 50000

timeout server 50000

timeout http-request 15s

timeout http-keep-alive 15s

frontend monitor-haproxy

bind *:9999

mode http

option httplog

monitor-uri /ruok

frontend yinzhengjie-k8s

bind 0.0.0.0:8443

bind 127.0.0.1:8443

mode tcp

option tcplog

tcp-request inspect-delay 5s

default_backend yinzhengjie-k8s

backend yinzhengjie-k8s

mode tcp

option tcplog

option tcp-check

balance roundrobin

default-server inter 10s downinter 5s rise 2 fall 2 slowstart 60s maxconn 250 maxqueue 256 weight 100

server node-ex01 10.0.0.41:6443 check

server node-ex02 10.0.0.42:6443 check

server node-ex03 10.0.0.43:6443 check

EOF

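3. Configure keepalived on all master nodes

Note:

- The "interface" field is the name of your physical NIC; if your NIC is ens33, change "eth0" to "ens33";

- "mcast_src_ip" differs on every master node; set it according to your environment;

- "virtual_ipaddress" is the VIP of the load balancer; it must match the apiserver address in the kubeconfig files;

- The script in the "script" field checks whether the local haproxy port that fronts the apiserver is still listening;

- "router_id" is the node IP; each master node uses its own IP

Steps:

3.1. Create the config file on "node-ex01"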

[root@node-ex01 ~]# ifconfig

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 10.0.0.41 netmask 255.255.255.0 broadcast 10.0.0.255

inet6 fe80::20c:29ff:fe2b:962d prefixlen 64 scopeid 0x20<link>

ether 00:0c:29:2b:96:2d txqueuelen 1000 (Ethernet)

RX packets 1021401 bytes 702381346 (702.3 MB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 1230403 bytes 1033531227 (1.0 GB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

...

[root@node-ex01 ~]#

[root@node-ex01 ~]# cat > /etc/keepalived/keepalived.conf <<'EOF'

! Configuration File for keepalived

global_defs {

router_id 10.0.0.41

}

vrrp_script chk_nginx {

script "/etc/keepalived/check_port.sh 8443"

interval 2

weight -20

}

vrrp_instance VI_1 {

state MASTER

interface eth0

virtual_router_id 251

priority 100

advert_int 1

mcast_src_ip 10.0.0.41

nopreempt

authentication {

auth_type PASS

auth_pass yinzhengjie_k8s

}

track_script {

chk_nginx

}

virtual_ipaddress {

10.0.0.240

}

}

EOF

3.2 Create the config file on "node-ex02"

[root@node-ex02 ~]# ifconfig

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 10.0.0.42 netmask 255.255.255.0 broadcast 10.0.0.255

inet6 fe80::20c:29ff:fe3d:28b5 prefixlen 64 scopeid 0x20<link>

ether 00:0c:29:3d:28:b5 txqueuelen 1000 (Ethernet)

RX packets 1318000 bytes 756967281 (756.9 MB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 917280 bytes 114644848 (114.6 MB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

...

[root@node-ex02 ~]#

[root@node-ex02 ~]# cat > /etc/keepalived/keepalived.conf <<EOF

! Configuration File for keepalived

global_defs {

router_id 10.0.0.42

}

vrrp_script chk_nginx {

script "/etc/keepalived/check_port.sh 8443"

interval 2

weight -20

}

vrrp_instance VI_1 {

state MASTER

interface eth0

virtual_router_id 251

priority 100

advert_int 1

mcast_src_ip 10.0.0.42

nopreempt

authentication {

auth_type PASS

auth_pass yinzhengjie_k8s

}

track_script {

chk_nginx

}

virtual_ipaddress {

10.0.0.240

}

}

EOF

3.3 Create the config file on "node-ex03"

[root@node-ex03 ~]# ifconfig

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 10.0.0.43 netmask 255.255.255.0 broadcast 10.0.0.255

inet6 fe80::20c:29ff:fe0c:3a61 prefixlen 64 scopeid 0x20<link>

ether 00:0c:29:0c:3a:61 txqueuelen 1000 (Ethernet)

RX packets 1005427 bytes 725368047 (725.3 MB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 592811 bytes 82908201 (82.9 MB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

...

[root@node-ex03 ~]#

[root@node-ex03 ~]# cat > /etc/keepalived/keepalived.conf <<EOF

! Configuration File for keepalived

global_defs {

router_id 10.0.0.43

}

vrrp_script chk_nginx {

script "/etc/keepalived/check_port.sh 8443"

interval 2

weight -20

}

vrrp_instance VI_1 {

state MASTER

interface eth0

virtual_router_id 251

priority 100

advert_int 1

mcast_src_ip 10.0.0.43

nopreempt

authentication {

auth_type PASS

auth_pass yinzhengjie_k8s

}

track_script {

chk_nginx

}

virtual_ipaddress {

10.0.0.240

}

}

EOF

3.4 Create the health check script on every keepalived node

cat > /etc/keepalived/check_port.sh <<'EOF'

#!/bin/bash

CHK_PORT=$1

if [ -n "$CHK_PORT" ];then

PORT_PROCESS=`ss -lnt|grep -w $CHK_PORT|wc -l`

if [ $PORT_PROCESS -eq 0 ];then

echo "Port $CHK_PORT Is Not Used,End."

systemctl stop keepalived

fi

else

echo "Check Port Cant Be Empty!"

fi

EOF

chmod +x /etc/keepalived/check_port.sh

4. Start and verify the haproxy service

4.1 Start the haproxy service on all nodes

systemctl enable --now haproxy

systemctl restart haproxy

systemctl status haproxy

4.2 Start keepalived on all nodes

systemctl enable --now keepalived

systemctl start keepalived

4.3 Check which node holds the VIP

[root@node-ex01 ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:2b:96:2d brd ff:ff:ff:ff:ff:ff

altname enp2s1

altname ens33

inet 10.0.0.41/24 brd 10.0.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet 10.0.0.240/32 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe2b:962d/64 scope link

valid_lft forever preferred_lft forever

3: tunl0@NONE: <NOARP> mtu 1480 qdisc noop state DOWN group default qlen 1000

link/ipip 0.0.0.0 brd 0.0.0.0

[root@node-ex01 ~]#

[root@node-ex02 ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:3d:28:b5 brd ff:ff:ff:ff:ff:ff

altname enp2s1

altname ens33

inet 10.0.0.42/24 brd 10.0.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe3d:28b5/64 scope link

valid_lft forever preferred_lft forever

3: tunl0@NONE: <NOARP> mtu 1480 qdisc noop state DOWN group default qlen 1000

link/ipip 0.0.0.0 brd 0.0.0.0

[root@node-ex02 ~]#

[root@node-ex03 ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:0c:3a:61 brd ff:ff:ff:ff:ff:ff

altname enp2s1

altname ens33

inet 10.0.0.43/24 brd 10.0.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe0c:3a61/64 scope link

valid_lft forever preferred_lft forever

3: tunl0@NONE: <NOARP> mtu 1480 qdisc noop state DOWN group default qlen 1000

link/ipip 0.0.0.0 brd 0.0.0.0

4: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default

link/ether 02:42:a0:03:71:c0 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

[root@node-ex03 ~]#
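Reading three full `ip a` listings to find the VIP gets tedious. The one-line-per-address form `ip -o -4 addr show` is easier to filter. A minimal sketch, assuming that output format (interface name in column 2, address/prefix in column 4); `vip_interface` is a hypothetical helper name.

```shell
# Read `ip -o -4 addr show` output on stdin and print the interface that
# carries the given address; prints nothing if the address is not local.
# vip_interface is a hypothetical helper name.
vip_interface() {
  # $1 = address to look for, e.g. the VIP 10.0.0.240
  awk -v vip="$1" '{ split($4, a, "/"); if (a[1] == vip) print $2 }'
}

# Example on each master node:
# ip -o -4 addr show | vip_interface 10.0.0.240
```

Only the node that currently holds the VIP prints an interface name, so the command can be run on all three masters through a loop over ssh.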

4.4 Verify haproxy with telnet

[root@node-ex03 ~]# telnet 10.0.0.240 8443

Trying 10.0.0.240...

Connected to 10.0.0.240.

Escape character is '^]'.

Connection closed by foreign host.

[root@node-exporter43 ~]#
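The telnet check is interactive. For scripts, the same TCP connect test can be done without telnet through bash's `/dev/tcp` pseudo-device. This is a sketch, assuming `bash` and the coreutils `timeout` command are installed; `port_open` is a hypothetical helper name and the 3-second timeout is an arbitrary choice.

```shell
# Succeed if a TCP connection to host:port can be opened within 3 seconds.
# port_open is a hypothetical helper name.
port_open() {
  # $1 = host, $2 = port; inside the child bash they arrive as $0 and $1.
  timeout 3 bash -c 'exec 3<>"/dev/tcp/$0/$1"' "$1" "$2" 2>/dev/null
}

# Example against the VIP used in this document:
# port_open 10.0.0.240 8443 && echo "haproxy is reachable" || echo "haproxy is NOT reachable"
```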

4.5 Verify over HTTP (the monitor URI)

[root@node-ex03 ~]# curl http://10.0.0.240:9999/ruok

<html><body><h1>200 OK</h1>

Service ready.

</body></html>

[root@node-ex03 ~]#

5. Verify that keepalived fails over the VIP

5.1 Verify that the service is working

[root@node-ex03 ~]# ping 10.0.0.240

PING 10.0.0.240 (10.0.0.240) 56(84) bytes of data.

64 bytes from 10.0.0.240: icmp_seq=1 ttl=64 time=0.146 ms

64 bytes from 10.0.0.240: icmp_seq=2 ttl=64 time=0.164 ms

64 bytes from 10.0.0.240: icmp_seq=3 ttl=64 time=0.716 ms

...

5.2 Stop haproxy and watch the VIP move

[root@node-ex01 ~]# ss -ntl | grep 8443

LISTEN 0 2000 127.0.0.1:8443 0.0.0.0:*

LISTEN 0 2000 0.0.0.0:8443 0.0.0.0:*

[root@node-exporter41 ~]#

[root@node-exporter41 ~]# systemctl stop haproxy.service

[root@node-exporter41 ~]#

[root@node-exporter41 ~]# ss -ntl | grep 8443

[root@node-exporter41 ~]#

[root@node-ex01 ~]# ip a

...

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:2b:96:2d brd ff:ff:ff:ff:ff:ff

altname enp2s1

altname ens33

inet 10.0.0.41/24 brd 10.0.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe2b:962d/64 scope link

valid_lft forever preferred_lft forever

[root@node-ex01 ~]#

[root@node-ex02 ~]# ip a

...

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:3d:28:b5 brd ff:ff:ff:ff:ff:ff

altname enp2s1

altname ens33

inet 10.0.0.42/24 brd 10.0.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe3d:28b5/64 scope link

valid_lft forever preferred_lft forever

[root@node-ex02 ~]#

[root@node-ex03 ~]# ip a

...

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:0c:3a:61 brd ff:ff:ff:ff:ff:ff

altname enp2s1

altname ens33

inet 10.0.0.43/24 brd 10.0.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet 10.0.0.240/32 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe0c:3a61/64 scope link

valid_lft forever preferred_lft forever

[root@node-ex03 ~]#

5.3 Watch the ping output again (a "Redirect Host" message appears at the moment of the switch)

[root@node-ex03 ~]# ping 10.0.0.240

PING 10.0.0.240 (10.0.0.240) 56(84) bytes of data.

64 bytes from 10.0.0.240: icmp_seq=1 ttl=64 time=0.146 ms

64 bytes from 10.0.0.240: icmp_seq=2 ttl=64 time=0.164 ms

64 bytes from 10.0.0.240: icmp_seq=3 ttl=64 time=0.716 ms

...

64 bytes from 10.0.0.240: icmp_seq=66 ttl=64 time=0.163 ms

64 bytes from 10.0.0.240: icmp_seq=67 ttl=64 time=0.201 ms

From 10.0.0.41 icmp_seq=68 Redirect Host(New nexthop: 10.0.0.240)

64 bytes from 10.0.0.240: icmp_seq=69 ttl=64 time=0.056 ms

9. Start the kube-apiserver component

1 Start the apiserver on node-ex01

Note:

- "--advertise-address" is the IP address of the corresponding master node;

- "--service-cluster-ip-range" is the Service (svc) CIDR

- "--service-node-port-range" is the NodePort port range for Services;

- "--etcd-servers" is the list of etcd cluster endpoints

Flag reference:

https://kubernetes.io/zh-cn/docs/reference/command-line-tools-reference/kube-apiserver/
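Because the unit file is nearly identical on every master and only "--advertise-address" changes, it is easy to copy it and forget to edit that one value. The sketch below pulls a single flag value out of a unit file so it can be compared with the node's own IP. `unit_flag` is a hypothetical helper name, and it assumes flags are written in the `--name=value` form used in this document.

```shell
# Print the value of one --name=value flag found in a systemd unit file.
# unit_flag is a hypothetical helper name.
unit_flag() {
  # $1 = unit file, $2 = flag name including the leading dashes
  awk -v flag="$2" '
    {
      for (i = 1; i <= NF; i++) {
        n = index($i, "=")
        if (n > 0 && substr($i, 1, n - 1) == flag) {
          print substr($i, n + 1)
          exit
        }
      }
    }
  ' "$1"
}

# Example on a master node, once the unit file exists:
# unit_flag /usr/lib/systemd/system/kube-apiserver.service --advertise-address
```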

Steps:

1.1 Create the systemd unit file on node-ex01

cat > /usr/lib/systemd/system/kube-apiserver.service << 'EOF'

[Unit]

Description=Jason Yin's Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-apiserver \

--v=2 \

--bind-address=0.0.0.0 \

--secure-port=6443 \

--allow-privileged=true \

--advertise-address=10.0.0.41 \

--service-cluster-ip-range=10.200.0.0/16 \

--service-node-port-range=3000-50000 \

--etcd-servers=https://10.0.0.41:2379,https://10.0.0.42:2379,https://10.0.0.43:2379 \

--etcd-cafile=/oldboyedu/certs/etcd/etcd-ca.pem \

--etcd-certfile=/oldboyedu/certs/etcd/etcd-server.pem \

--etcd-keyfile=/oldboyedu/certs/etcd/etcd-server-key.pem \

--client-ca-file=/oldboyedu/certs/kubernetes/k8s-ca.pem \

--tls-cert-file=/oldboyedu/certs/kubernetes/apiserver.pem \

--tls-private-key-file=/oldboyedu/certs/kubernetes/apiserver-key.pem \

--kubelet-client-certificate=/oldboyedu/certs/kubernetes/apiserver.pem \

--kubelet-client-key=/oldboyedu/certs/kubernetes/apiserver-key.pem \

--service-account-key-file=/oldboyedu/certs/kubernetes/sa.pub \

--service-account-signing-key-file=/oldboyedu/certs/kubernetes/sa.key \

--service-account-issuer=https://kubernetes.default.svc.oldboyedu.com \

--kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \

--authorization-mode=Node,RBAC \

--enable-bootstrap-token-auth=true \

--requestheader-client-ca-file=/oldboyedu/certs/kubernetes/front-proxy-ca.pem \

--proxy-client-cert-file=/oldboyedu/certs/kubernetes/front-proxy-client.pem \

--proxy-client-key-file=/oldboyedu/certs/kubernetes/front-proxy-client-key.pem \

--requestheader-allowed-names=aggregator \

--requestheader-group-headers=X-Remote-Group \

--requestheader-extra-headers-prefix=X-Remote-Extra- \

--requestheader-username-headers=X-Remote-User

Restart=on-failure

RestartSec=10s

LimitNOFILE=65535

[Install]

WantedBy=multi-user.target

EOF

1.2 Start the service

systemctl daemon-reload && systemctl enable --now kube-apiserver

systemctl status kube-apiserver

q

ss -ntl | grep 6443

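2 Start the API server on node-ex02

Notes:

- "--advertise-address" is the IP address of this master node.

- "--service-cluster-ip-range" is the Service (svc) network range.

- "--service-node-port-range" is the NodePort port range for Services.

- "--etcd-servers" lists the etcd cluster endpoints.

Hands-on steps:

2.1 Create the unit file on node-ex02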

cat > /usr/lib/systemd/system/kube-apiserver.service << 'EOF'

[Unit]

Description=Jason Yin's Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-apiserver \

--v=2 \

--bind-address=0.0.0.0 \

--secure-port=6443 \

--allow-privileged=true \

--advertise-address=10.0.0.42 \

--service-cluster-ip-range=10.200.0.0/16 \

--service-node-port-range=3000-50000 \

--etcd-servers=https://10.0.0.41:2379,https://10.0.0.42:2379,https://10.0.0.43:2379 \

--etcd-cafile=/oldboyedu/certs/etcd/etcd-ca.pem \

--etcd-certfile=/oldboyedu/certs/etcd/etcd-server.pem \

--etcd-keyfile=/oldboyedu/certs/etcd/etcd-server-key.pem \

--client-ca-file=/oldboyedu/certs/kubernetes/k8s-ca.pem \

--tls-cert-file=/oldboyedu/certs/kubernetes/apiserver.pem \

--tls-private-key-file=/oldboyedu/certs/kubernetes/apiserver-key.pem \

--kubelet-client-certificate=/oldboyedu/certs/kubernetes/apiserver.pem \

--kubelet-client-key=/oldboyedu/certs/kubernetes/apiserver-key.pem \

--service-account-key-file=/oldboyedu/certs/kubernetes/sa.pub \

--service-account-signing-key-file=/oldboyedu/certs/kubernetes/sa.key \

--service-account-issuer=https://kubernetes.default.svc.oldboyedu.com \

--kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \

--authorization-mode=Node,RBAC \

--enable-bootstrap-token-auth=true \

--requestheader-client-ca-file=/oldboyedu/certs/kubernetes/front-proxy-ca.pem \

--proxy-client-cert-file=/oldboyedu/certs/kubernetes/front-proxy-client.pem \

--proxy-client-key-file=/oldboyedu/certs/kubernetes/front-proxy-client-key.pem \

--requestheader-allowed-names=aggregator \

--requestheader-group-headers=X-Remote-Group \

--requestheader-extra-headers-prefix=X-Remote-Extra- \

--requestheader-username-headers=X-Remote-User

Restart=on-failure

RestartSec=10s

LimitNOFILE=65535

[Install]

WantedBy=multi-user.target

EOF

2.2 Start the service

systemctl daemon-reload && systemctl enable --now kube-apiserver

systemctl status kube-apiserver

ss -ntl | grep 6443

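3 Start the API server on node-ex03

Notes:

- "--advertise-address" is the IP address of this master node.

- "--service-cluster-ip-range" is the Service (svc) network range.

- "--service-node-port-range" is the NodePort port range for Services.

- "--etcd-servers" lists the etcd cluster endpoints.

Hands-on steps:

3.1 Create the unit file on node-ex03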

cat > /usr/lib/systemd/system/kube-apiserver.service << 'EOF'

[Unit]

Description=Jason Yin's Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-apiserver \

--v=2 \

--bind-address=0.0.0.0 \

--secure-port=6443 \

--allow-privileged=true \

--advertise-address=10.0.0.43 \

--service-cluster-ip-range=10.200.0.0/16 \

--service-node-port-range=3000-50000 \

--etcd-servers=https://10.0.0.41:2379,https://10.0.0.42:2379,https://10.0.0.43:2379 \

--etcd-cafile=/oldboyedu/certs/etcd/etcd-ca.pem \

--etcd-certfile=/oldboyedu/certs/etcd/etcd-server.pem \

--etcd-keyfile=/oldboyedu/certs/etcd/etcd-server-key.pem \

--client-ca-file=/oldboyedu/certs/kubernetes/k8s-ca.pem \

--tls-cert-file=/oldboyedu/certs/kubernetes/apiserver.pem \

--tls-private-key-file=/oldboyedu/certs/kubernetes/apiserver-key.pem \

--kubelet-client-certificate=/oldboyedu/certs/kubernetes/apiserver.pem \

--kubelet-client-key=/oldboyedu/certs/kubernetes/apiserver-key.pem \

--service-account-key-file=/oldboyedu/certs/kubernetes/sa.pub \

--service-account-signing-key-file=/oldboyedu/certs/kubernetes/sa.key \

--service-account-issuer=https://kubernetes.default.svc.oldboyedu.com \

--kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \

--authorization-mode=Node,RBAC \

--enable-bootstrap-token-auth=true \

--requestheader-client-ca-file=/oldboyedu/certs/kubernetes/front-proxy-ca.pem \

--proxy-client-cert-file=/oldboyedu/certs/kubernetes/front-proxy-client.pem \

--proxy-client-key-file=/oldboyedu/certs/kubernetes/front-proxy-client-key.pem \

--requestheader-allowed-names=aggregator \

--requestheader-group-headers=X-Remote-Group \

--requestheader-extra-headers-prefix=X-Remote-Extra- \

--requestheader-username-headers=X-Remote-User

Restart=on-failure

RestartSec=10s

LimitNOFILE=65535

[Install]

WantedBy=multi-user.target

EOF

3.2 Start the service

systemctl daemon-reload && systemctl enable --now kube-apiserver

systemctl status kube-apiserver

ss -ntl | grep 6443
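The three unit files above differ only in the "--advertise-address" value. As a minimal sketch (not part of the original procedure), that per-node line could be rendered from a single template instead of being edited by hand. The function name render_apiserver_fragment and the scratch output directory are hypothetical, and the fragment is deliberately abbreviated to three lines rather than the full unit file.

```shell
#!/bin/bash
# Sketch: render the per-node part of the kube-apiserver unit from one template.
# render_apiserver_fragment and the scratch directory are illustrative names.

render_apiserver_fragment() {
    ip="$1"
    # Unquoted heredoc: ${ip} is expanded, and "\\" becomes a literal backslash.
    cat <<EOF
ExecStart=/usr/local/bin/kube-apiserver \\
  --advertise-address=${ip} \\
  --secure-port=6443
EOF
}

outdir="$(mktemp -d)"
for ip in 10.0.0.41 10.0.0.42 10.0.0.43; do
    render_apiserver_fragment "${ip}" > "${outdir}/kube-apiserver-${ip}.fragment"
done

# Show the one line that differs between nodes.
grep -- '--advertise-address' "${outdir}"/*.fragment
```

Rendering from one template keeps the shared flags (certificate paths, etcd endpoints) identical across the masters, so only the node IP can drift.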

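10. Deploy the ControllerManager component

1 Create the unit file on all nodes

Notes:

- "--cluster-cidr" is the Pod network range; change it to suit your environment.

Flag reference:

https://kubernetes.io/zh-cn/docs/reference/command-line-tools-reference/kube-controller-manager/

The controller-manager unit file is identical on all nodes (provided the certificate files are stored at the same paths):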

cat > /usr/lib/systemd/system/kube-controller-manager.service << 'EOF'

[Unit]

Description=Jason Yin's Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-controller-manager \

--v=2 \

--root-ca-file=/oldboyedu/certs/kubernetes/k8s-ca.pem \

--cluster-signing-cert-file=/oldboyedu/certs/kubernetes/k8s-ca.pem \

--cluster-signing-key-file=/oldboyedu/certs/kubernetes/k8s-ca-key.pem \

--service-account-private-key-file=/oldboyedu/certs/kubernetes/sa.key \

--kubeconfig=/oldboyedu/certs/kubeconfig/kube-controller-manager.kubeconfig \

--leader-elect=true \

--use-service-account-credentials=true \

--node-monitor-grace-period=40s \

--node-monitor-period=5s \

--controllers=*,bootstrapsigner,tokencleaner \

--allocate-node-cidrs=true \

--cluster-cidr=10.100.0.0/16 \

--requestheader-client-ca-file=/oldboyedu/certs/kubernetes/front-proxy-ca.pem \

--node-cidr-mask-size=24

Restart=always

RestartSec=10s

[Install]

WantedBy=multi-user.target

EOF
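With "--cluster-cidr=10.100.0.0/16" and "--node-cidr-mask-size=24" as set in the unit file above, the number of per-node Pod subnets follows from the two prefix lengths. The arithmetic can be sketched as follows (variable names are illustrative); whether these per-node ranges are actually used for Pod addresses depends on the IPAM of the CNI plugin.

```shell
#!/bin/bash
# Sketch: how many /24 node subnets fit into the /16 cluster CIDR.
cluster_prefix=16   # from --cluster-cidr=10.100.0.0/16
node_prefix=24      # from --node-cidr-mask-size=24

node_subnets=$(( 1 << (node_prefix - cluster_prefix) ))
addresses_per_subnet=$(( 1 << (32 - node_prefix) ))

echo "node subnets available: ${node_subnets}"
echo "addresses per node subnet: ${addresses_per_subnet}"
```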

2 Start the controller-manager service

systemctl daemon-reload

systemctl enable --now kube-controller-manager

systemctl status kube-controller-manager

q

ss -ntl | grep 10257

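11. Deploy the Scheduler component

1 Create the unit file on all nodes

Flag reference:

https://kubernetes.io/zh-cn/docs/reference/command-line-tools-reference/kube-scheduler/

The scheduler unit file is identical on all nodes (provided the certificate files are stored at the same paths):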

cat > /usr/lib/systemd/system/kube-scheduler.service <<'EOF'

[Unit]

Description=Jason Yin's Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-scheduler \

--v=2 \

--leader-elect=true \

--kubeconfig=/oldboyedu/certs/kubeconfig/kube-scheduler.kubeconfig

Restart=always

RestartSec=10s

[Install]

WantedBy=multi-user.target

EOF

2 Start the scheduler service

systemctl daemon-reload

systemctl enable --now kube-scheduler

systemctl status kube-scheduler

q

ss -ntl | grep 10259

12. Three ways to give kubectl access to the cluster, and verifying the master components

1. Pass --kubeconfig explicitly (the path must be given on every command)

[root@node-ex01 ~]# kubectl get cs --kubeconfig=/oldboyedu/certs/kubeconfig/kube-admin.kubeconfig

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-0 Healthy ok

[root@node-ex01 ~]#

2. Use the KUBECONFIG environment variable (temporary; it no longer applies after a reboot or once the variable is unset)

[root@node-ex02 ~]# export KUBECONFIG=/oldboyedu/certs/kubeconfig/kube-admin.kubeconfig

[root@node-ex02 ~]#

[root@node-ex02 ~]# kubectl get cs

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-0 Healthy ok

[root@node-ex02 ~]#

3. Store the file at the default path (recommended)

[root@node-ex03 ~]# mkdir -pv ~/.kube

[root@node-ex03 ~]# cp /oldboyedu/certs/kubeconfig/kube-admin.kubeconfig ~/.kube/config

[root@node-ex03 ~]# kubectl get cs

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-0 Healthy ok

[root@node-ex03 ~]#
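The three methods above correspond to the order in which kubectl looks for its configuration: the --kubeconfig flag first, then the KUBECONFIG environment variable, then ~/.kube/config. The sketch below mirrors that order in a hypothetical helper named resolve_kubeconfig. It is a simplification: KUBECONFIG may hold a colon-separated list of files that kubectl merges, which this sketch treats as a single path.

```shell
#!/bin/bash
# Sketch: which kubeconfig path applies, given an optional --kubeconfig value.
# resolve_kubeconfig is an illustrative helper, not part of kubectl.

resolve_kubeconfig() {
    flag_path="$1"
    if [ -n "${flag_path}" ]; then
        echo "${flag_path}"            # 1. explicit --kubeconfig flag
    elif [ -n "${KUBECONFIG:-}" ]; then
        echo "${KUBECONFIG}"           # 2. KUBECONFIG environment variable
    else
        echo "${HOME}/.kube/config"    # 3. default location
    fi
}

# Example calls, each in a subshell so the environment changes stay local.
( resolve_kubeconfig /oldboyedu/certs/kubeconfig/kube-admin.kubeconfig )
( KUBECONFIG=/oldboyedu/certs/kubeconfig/kube-admin.kubeconfig; resolve_kubeconfig "" )
( unset KUBECONFIG; resolve_kubeconfig "" )
```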

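12. Configure TLS bootstrapping so kubelet certificates are issued automatically

1. Create the bootstrap-kubelet.kubeconfig file on node-ex01

Notes:

- "--server" is the address of the load balancer, which reverse-proxies to the master nodes.

- "--token" can be customized, but the "token-id" and "token-secret" values in the bootstrap Secret must be changed to match.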
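Bootstrap tokens have the form "<token-id>.<token-secret>", where the id is 6 and the secret 16 characters from [a-z0-9], and the matching Secret must be named "bootstrap-token-<token-id>". A custom token can be checked against that shape before use; the sketch below assumes a hypothetical helper name, check_bootstrap_token.

```shell
#!/bin/bash
# Sketch: validate a bootstrap token and derive the values the Secret needs.
# check_bootstrap_token is an illustrative helper name.

check_bootstrap_token() {
    token="$1"
    if printf '%s\n' "${token}" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'; then
        token_id="${token%%.*}"       # part before the dot
        token_secret="${token#*.}"    # part after the dot
        echo "token-id=${token_id} token-secret=${token_secret} secret-name=bootstrap-token-${token_id}"
    else
        echo "invalid bootstrap token format: ${token}" >&2
        return 1
    fi
}

check_bootstrap_token "oldboy.jasonyinzhengjie"
```

For the token used in this guide, the derived name is bootstrap-token-oldboy, which is the Secret name used in the manifest later in this section.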

1.1 Set the cluster

[root@node-ex01 ~]# kubectl config set-cluster yinzhengjie-k8s \

--certificate-authority=/oldboyedu/certs/kubernetes/k8s-ca.pem \

--embed-certs=true \

--server=https://10.0.0.240:8443 \

--kubeconfig=/oldboyedu/certs/kubeconfig/bootstrap-kubelet.kubeconfig

1.2 Set the user credentials

[root@node-ex01 ~]# kubectl config set-credentials tls-bootstrap-token-user \

--token=oldboy.jasonyinzhengjie \

--kubeconfig=/oldboyedu/certs/kubeconfig/bootstrap-kubelet.kubeconfig

1.3 Bind the cluster and the user in a context

[root@node-ex01 ~]# kubectl config set-context tls-bootstrap-token-user@kubernetes \

--cluster=yinzhengjie-k8s \

--user=tls-bootstrap-token-user \

--kubeconfig=/oldboyedu/certs/kubeconfig/bootstrap-kubelet.kubeconfig

1.4 Set the default context

[root@node-ex01 ~]# kubectl config use-context tls-bootstrap-token-user@kubernetes \

--kubeconfig=/oldboyedu/certs/kubeconfig/bootstrap-kubelet.kubeconfig

2 Create the bootstrap Secret and its RBAC authorization

2.1 Create the bootstrap-secret manifest used for authorization

[root@node-ex01 ~]# cat > bootstrap-secret.yaml <<EOF

apiVersion: v1

kind: Secret

metadata:

  name: bootstrap-token-oldboy

  namespace: kube-system

type: bootstrap.kubernetes.io/token

stringData:

  description: "The default bootstrap token generated by 'kubelet '."

  token-id: oldboy

  token-secret: jasonyinzhengjie

  usage-bootstrap-authentication: "true"

  usage-bootstrap-signing: "true"

  auth-extra-groups: system:bootstrappers:default-node-token,system:bootstrappers:worker,system:bootstrappers:ingress

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

  name: kubelet-bootstrap

roleRef:

  apiGroup: rbac.authorization.k8s.io

  kind: ClusterRole

  name: system:node-bootstrapper

subjects:

- apiGroup: rbac.authorization.k8s.io

  kind: Group

  name: system:bootstrappers:default-node-token

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

  name: node-autoapprove-bootstrap

roleRef:

  apiGroup: rbac.authorization.k8s.io

  kind: ClusterRole

  name: system:certificates.k8s.io:certificatesigningrequests:nodeclient

subjects:

- apiGroup: rbac.authorization.k8s.io

  kind: Group

  name: system:bootstrappers:default-node-token

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

  name: node-autoapprove-certificate-rotation

roleRef:

  apiGroup: rbac.authorization.k8s.io

  kind: ClusterRole

  name: system:certificates.k8s.io:certificatesigningrequests:selfnodeclient

subjects:

- apiGroup: rbac.authorization.k8s.io

  kind: Group

  name: system:nodes

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

  annotations:

    rbac.authorization.kubernetes.io/autoupdate: "true"

  labels:

    kubernetes.io/bootstrapping: rbac-defaults

  name: system:kube-apiserver-to-kubelet

rules:

- apiGroups:

  - ""

  resources:

  - nodes/proxy

  - nodes/stats

  - nodes/log

  - nodes/spec

  - nodes/metrics

  verbs:

  - "*"

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

  name: system:kube-apiserver

  namespace: ""

roleRef:

  apiGroup: rbac.authorization.k8s.io

  kind: ClusterRole

  name: system:kube-apiserver-to-kubelet

subjects:

- apiGroup: rbac.authorization.k8s.io

  kind: User

  name: kube-apiserver

EOF

2.2 Apply the bootstrap-secret manifest

[root@node-ex01 ~]# kubectl apply -f bootstrap-secret.yaml

secret/bootstrap-token-oldboy configured

clusterrolebinding.rbac.authorization.k8s.io/kubelet-bootstrap unchanged

clusterrolebinding.rbac.authorization.k8s.io/node-autoapprove-bootstrap unchanged

clusterrolebinding.rbac.authorization.k8s.io/node-autoapprove-certificate-rotation unchanged

clusterrole.rbac.authorization.k8s.io/system:kube-apiserver-to-kubelet created

clusterrolebinding.rbac.authorization.k8s.io/system:kube-apiserver created

[root@node-ex01 ~]#

13. Deploy the worker nodes: starting kubelet

1 Copy the certificate files

1.1 Distribute the bootstrap kubeconfig from node-ex01 to the other nodes

cd /oldboyedu/certs/

for NODE in node-ex02 node-ex03; do

scp kubeconfig/bootstrap-kubelet.kubeconfig $NODE:/oldboyedu/certs/kubeconfig/

done

1.2 Verify on the worker nodes

[root@node-ex01 certs]# ll /oldboyedu/certs/kubeconfig

total 36

drwxr-xr-x 2 root root 4096 Dec 2 16:12 ./

drwxr-xr-x 6 root root 4096 Dec 2 11:55 ../

-rw------- 1 root root 2243 Dec 2 16:12 bootstrap-kubelet.kubeconfig

-rw------- 1 root root 6372 Dec 2 12:09 kube-admin.kubeconfig

-rw------- 1 root root 6524 Dec 2 12:00 kube-controller-manager.kubeconfig

-rw------- 1 root root 6452 Dec 2 12:05 kube-scheduler.kubeconfig

[root@node-ex01 certs]#

[root@node-ex02 ~]# ll /oldboyedu/certs/kubeconfig

total 36

drwxr-xr-x 2 root root 4096 Dec 2 16:23 ./

drwxr-xr-x 5 root root 4096 Dec 2 12:15 ../

-rw------- 1 root root 2243 Dec 2 16:23 bootstrap-kubelet.kubeconfig

-rw------- 1 root root 6372 Dec 2 12:15 kube-admin.kubeconfig

-rw------- 1 root root 6524 Dec 2 12:15 kube-controller-manager.kubeconfig

-rw------- 1 root root 6452 Dec 2 12:15 kube-scheduler.kubeconfig

[root@node-ex02 ~]#

[root@node-ex03 ~]# ll /oldboyedu/certs/kubeconfig

total 36

drwxr-xr-x 2 root root 4096 Dec 2 16:23 ./

drwxr-xr-x 6 root root 4096 Dec 2 12:15 ../

-rw------- 1 root root 2243 Dec 2 16:23 bootstrap-kubelet.kubeconfig

-rw------- 1 root root 6372 Dec 2 12:15 kube-admin.kubeconfig

-rw------- 1 root root 6524 Dec 2 12:15 kube-controller-manager.kubeconfig

-rw------- 1 root root 6452 Dec 2 12:15 kube-scheduler.kubeconfig

[root@node-ex03 ~]#

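2 Start the kubelet service

Notes:

- The "kubelet.kubeconfig" file passed via "--kubeconfig" in "10-kubelet.conf" does not exist yet; it is generated automatically later.

- "clusterDNS" is the cluster DNS address; it can be customized, for example "10.200.0.254".

- "clusterDomain" is the cluster domain; it must match the cluster design, for example "oldboyedu.com".

- In "10-kubelet.conf", "ExecStart=" must appear twice; otherwise kubelet may fail to start.

Hands-on steps:

2.1 Create the working directories on all nodes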

mkdir -p /var/lib/kubelet /var/log/kubernetes /etc/systemd/system/kubelet.service.d /etc/kubernetes/manifests/

2.2 Create the kubelet configuration file on all nodes

cat > /etc/kubernetes/kubelet-conf.yml <<'EOF'

apiVersion: kubelet.config.k8s.io/v1beta1

kind: KubeletConfiguration

address: 0.0.0.0

port: 10250

readOnlyPort: 10255

authentication:

  anonymous:

    enabled: false

  webhook:

    cacheTTL: 2m0s

    enabled: true

  x509:

    clientCAFile: /oldboyedu/certs/kubernetes/k8s-ca.pem

authorization:

  mode: Webhook

  webhook:

    cacheAuthorizedTTL: 5m0s

    cacheUnauthorizedTTL: 30s

cgroupDriver: systemd

cgroupsPerQOS: true

clusterDNS:

- 10.200.0.254

clusterDomain: oldboyedu.com

containerLogMaxFiles: 5

containerLogMaxSize: 10Mi

contentType: application/vnd.kubernetes.protobuf

cpuCFSQuota: true

cpuManagerPolicy: none

cpuManagerReconcilePeriod: 10s

enableControllerAttachDetach: true

enableDebuggingHandlers: true

enforceNodeAllocatable:

- pods

eventBurst: 10

eventRecordQPS: 5

evictionHard:

  imagefs.available: 15%

  memory.available: 100Mi

  nodefs.available: 10%

  nodefs.inodesFree: 5%

evictionPressureTransitionPeriod: 5m0s

failSwapOn: true

fileCheckFrequency: 20s

hairpinMode: promiscuous-bridge

healthzBindAddress: 127.0.0.1

healthzPort: 10248

httpCheckFrequency: 20s

imageGCHighThresholdPercent: 85

imageGCLowThresholdPercent: 80

imageMinimumGCAge: 2m0s

iptablesDropBit: 15

iptablesMasqueradeBit: 14

kubeAPIBurst: 10

kubeAPIQPS: 5

makeIPTablesUtilChains: true

maxOpenFiles: 1000000

maxPods: 888

nodeStatusUpdateFrequency: 10s

oomScoreAdj: -999

podPidsLimit: -1

registryBurst: 10

registryPullQPS: 5

resolvConf: /etc/resolv.conf

rotateCertificates: true

runtimeRequestTimeout: 2m0s

serializeImagePulls: true

staticPodPath: /etc/kubernetes/manifests

streamingConnectionIdleTimeout: 4h0m0s

syncFrequency: 1m0s

volumeStatsAggPeriod: 1m0s

EOF
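The "clusterDNS" address in the file above is the ClusterIP of the cluster DNS Service, so it is expected to fall inside the API server's "--service-cluster-ip-range" (10.200.0.0/16 in this guide). A minimal sketch of that check for IPv4 follows; ip_to_int and ip_in_cidr are hypothetical helper names.

```shell
#!/bin/bash
# Sketch: check that an IPv4 address lies inside a CIDR range.
# ip_to_int and ip_in_cidr are illustrative helpers.

ip_to_int() {
    # Split the dotted quad on "." into $1..$4, then pack it into one integer.
    old_ifs="${IFS}"
    IFS=.
    set -- $1
    IFS="${old_ifs}"
    echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
}

ip_in_cidr() {
    ip="$1"
    cidr="$2"
    network="${cidr%/*}"
    prefix="${cidr#*/}"
    # 4294967295 is 0xFFFFFFFF; keep only the top <prefix> bits.
    mask=$(( (4294967295 << (32 - prefix)) & 4294967295 ))
    [ $(( $(ip_to_int "${ip}") & mask )) -eq $(( $(ip_to_int "${network}") & mask )) ]
}

if ip_in_cidr 10.200.0.254 10.200.0.0/16; then
    echo "clusterDNS is inside the Service range"
else
    echo "clusterDNS is OUTSIDE the Service range"
fi
```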

2.3 Create the kubelet service unit on all nodes

cat > /usr/lib/systemd/system/kubelet.service <<'EOF'

[Unit]

Description=JasonYin's Kubernetes Kubelet

Documentation=https://github.com/kubernetes/kubernetes

After=containerd.service

Requires=containerd.service

[Service]

ExecStart=/usr/local/bin/kubelet

Restart=always

StartLimitInterval=0

RestartSec=10

[Install]

WantedBy=multi-user.target

EOF

2.4 Create the kubelet service drop-in file on all nodes

cat > /etc/systemd/system/kubelet.service.d/10-kubelet.conf <<'EOF'

[Service]

Environment="KUBELET_KUBECONFIG_ARGS=--bootstrap-kubeconfig=/oldboyedu/certs/kubeconfig/bootstrap-kubelet.kubeconfig --kubeconfig=/oldboyedu/certs/kubeconfig/kubelet.kubeconfig"

Environment="KUBELET_CONFIG_ARGS=--config=/etc/kubernetes/kubelet-conf.yml"

Environment="KUBELET_SYSTEM_ARGS=--container-runtime-endpoint=unix:///run/containerd/containerd.sock"

Environment="KUBELET_EXTRA_ARGS=--node-labels=node.kubernetes.io/node='' "

ExecStart=

ExecStart=/usr/local/bin/kubelet $KUBELET_KUBECONFIG_ARGS $KUBELET_CONFIG_ARGS $KUBELET_SYSTEM_ARGS $KUBELET_EXTRA_ARGS

EOF
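The drop-in above lists "ExecStart=" twice because systemd treats ExecStart as a list: the empty assignment clears the command inherited from kubelet.service, and the second line then sets the only command. Without the reset, the service would end up with two ExecStart commands, which systemd rejects for services that are not Type=oneshot. The sketch below is a simplified shell model of that merge rule, not systemd's implementation, and effective_execstart is a hypothetical helper.

```shell
#!/bin/bash
# Sketch: model how an empty "ExecStart=" resets the inherited command list.
# effective_execstart reads unit lines on stdin (base unit first, then drop-in).

effective_execstart() {
    cmds=""
    while IFS= read -r line; do
        case "${line}" in
            ExecStart=)  cmds="" ;;                          # empty value: reset the list
            ExecStart=*) cmds="${cmds}${line#ExecStart=}
" ;;                                                         # otherwise: append a command
        esac
    done
    printf '%s' "${cmds}"
}

with_reset="$(printf '%s\n' \
    'ExecStart=/usr/local/bin/kubelet' \
    'ExecStart=' \
    'ExecStart=/usr/local/bin/kubelet $KUBELET_CONFIG_ARGS' | effective_execstart)"

without_reset="$(printf '%s\n' \
    'ExecStart=/usr/local/bin/kubelet' \
    'ExecStart=/usr/local/bin/kubelet $KUBELET_CONFIG_ARGS' | effective_execstart)"

echo "with reset:    $(printf '%s\n' "${with_reset}" | grep -c .) command(s)"
echo "without reset: $(printf '%s\n' "${without_reset}" | grep -c .) command(s)"
```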

2.5 Start kubelet on all nodes

systemctl daemon-reload

systemctl enable --now kubelet

systemctl status kubelet

2.6 View the node list from the master nodes

[root@node-ex01 ~]# kubectl get nodes -o wide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

node-exporter41 NotReady <none> 4m7s v1.31.3 10.0.0.41 <none> Ubuntu 22.04.4 LTS 5.15.0-125-generic containerd://1.6.36

node-exporter42 NotReady <none> 3m57s v1.31.3 10.0.0.42 <none> Ubuntu 22.04.4 LTS 5.15.0-125-generic containerd://1.6.36

node-exporter43 NotReady <none> 3m49s v1.31.3 10.0.0.43 <none> Ubuntu 22.04.4 LTS 5.15.0-125-generic containerd://1.6.36

[root@node-ex01 ~]#

2.7 The corresponding client certificate signing requests (CSRs) are now visible

[root@node-ex01 ~]# kubectl get csr

NAME AGE SIGNERNAME REQUESTOR REQUESTEDDURATION CONDITION

csr-25hxc 4m20s kubernetes.io/kube-apiserver-client-kubelet system:bootstrap:oldboy <none> Approved,Issued

csr-8c6dd 4m10s kubernetes.io/kube-apiserver-client-kubelet system:bootstrap:oldboy <none> Approved,Issued

csr-z959q 4m2s kubernetes.io/kube-apiserver-client-kubelet system:bootstrap:oldboy <none> Approved,Issued

[root@node-ex01 ~]#

14. Deploy the worker nodes: the kube-proxy service

1. Generate the CSR file for kube-proxy

[root@node-ex41 ~]# cd /oldboyedu/certs/pki/

[root@node-ex41 pki]# cat > kube-proxy-csr.json <<EOF

{

"CN": "system:kube-proxy",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:kube-proxy",

"OU": "Kubernetes-manual"

}

]

}

EOF

2. Create the certificate files that kube-proxy needs

[root@node-ex41 pki]# cfssl gencert \

-ca=/oldboyedu/certs/kubernetes/k8s-ca.pem \

-ca-key=/oldboyedu/certs/kubernetes/k8s-ca-key.pem \

-config=k8s-ca-config.json \

-profile=kubernetes \

kube-proxy-csr.json | cfssljson -bare /oldboyedu/certs/kubernetes/kube-proxy

[root@node-ex41 pki]# ll /oldboyedu/certs/kubernetes/kube-proxy*

-rw-r--r-- 1 root root 1045 Dec 2 16:38 /oldboyedu/certs/kubernetes/kube-proxy.csr

-rw------- 1 root root 1679 Dec 2 16:38 /oldboyedu/certs/kubernetes/kube-proxy-key.pem

-rw-r--r-- 1 root root 1464 Dec 2 16:38 /oldboyedu/certs/kubernetes/kube-proxy.pem

[root@node-ex41 pki]#

3. Set the cluster

[root@node-ex41 pki]# kubectl config set-cluster yinzhengjie-k8s \

--certificate-authority=/oldboyedu/certs/kubernetes/k8s-ca.pem \

--embed-certs=true \

--server=https://10.0.0.240:8443 \

--kubeconfig=/oldboyedu/certs/kubeconfig/kube-proxy.kubeconfig

4. Set the user credentials

[root@node-ex41 pki]# kubectl config set-credentials system:kube-proxy \

--client-certificate=/oldboyedu/certs/kubernetes/kube-proxy.pem \

--client-key=/oldboyedu/certs/kubernetes/kube-proxy-key.pem \

--embed-certs=true \

--kubeconfig=/oldboyedu/certs/kubeconfig/kube-proxy.kubeconfig

5. Set the context

[root@node-ex41 pki]# kubectl config set-context kube-proxy@kubernetes \

--cluster=yinzhengjie-k8s \

--user=system:kube-proxy \

--kubeconfig=/oldboyedu/certs/kubeconfig/kube-proxy.kubeconfig

6. Use that context as the default

[root@node-ex41 pki]# kubectl config use-context kube-proxy@kubernetes \

--kubeconfig=/oldboyedu/certs/kubeconfig/kube-proxy.kubeconfig

7. Send the kube-proxy kubeconfig file to the other nodes

for NODE in node-ex02 node-ex03; do

echo $NODE

scp /oldboyedu/certs/kubeconfig/kube-proxy.kubeconfig $NODE:/oldboyedu/certs/kubeconfig/

done

8. Create the kube-proxy.yml configuration file on all nodes

cat > /etc/kubernetes/kube-proxy.yml << EOF

apiVersion: kubeproxy.config.k8s.io/v1alpha1

kind: KubeProxyConfiguration

bindAddress: 0.0.0.0

metricsBindAddress: 127.0.0.1:10249

clientConnection:

  acceptContentTypes: ""

  burst: 10

  contentType: application/vnd.kubernetes.protobuf

  kubeconfig: /oldboyedu/certs/kubeconfig/kube-proxy.kubeconfig

  qps: 5

clusterCIDR: 10.100.0.0/16

configSyncPeriod: 15m0s

conntrack:

  max: null

  maxPerCore: 32768

  min: 131072

  tcpCloseWaitTimeout: 1h0m0s

  tcpEstablishedTimeout: 24h0m0s

enableProfiling: false

healthzBindAddress: 0.0.0.0:10256

hostnameOverride: ""

iptables:

  masqueradeAll: false

  masqueradeBit: 14

  minSyncPeriod: 0s

ipvs:

  masqueradeAll: true

  minSyncPeriod: 5s

  scheduler: "rr"

  syncPeriod: 30s

mode: "ipvs"

nodePortAddresses: null

oomScoreAdj: -999

portRange: ""

udpIdleTimeout: 250ms

EOF

9. Manage kube-proxy with systemd on all nodes

cat > /usr/lib/systemd/system/kube-proxy.service << 'EOF'

[Unit]

Description=Jason Yin's Kubernetes Proxy

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-proxy \

--config=/etc/kubernetes/kube-proxy.yml \

--v=2

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

10. Start kube-proxy on all nodes

systemctl daemon-reload && systemctl enable --now kube-proxy

systemctl status kube-proxy

q

ss -ntl |grep 10249

15. Calico network plugin versions for each K8s release

For K8s 1.23.17, Calico 3.25 or earlier is recommended.

For K8s 1.31.3, Calico 3.29 or later is recommended.

Recommended reading:

https://archive-os-3-25.netlify.app/calico/3.25/getting-started/kubernetes/requirements#kubernetes-requirements

https://docs.tigera.io/calico/latest/getting-started/kubernetes/requirements#kubernetes-requirements

- Using the Calico CNI network plugin with K8s

1. Download the manifest

[root@node-ex01 ~]# wget https://raw.githubusercontent.com/projectcalico/calico/v3.29.1/manifests/tigera-operator.yaml

2. Install the Tigera Calico operator and the custom resource definitions

[root@node-ex01 ~]# kubectl create -f tigera-operator.yaml

3. Download the custom resources manifest

[root@node-ex01 ~]# wget https://raw.githubusercontent.com/projectcalico/calico/v3.29.1/manifests/custom-resources.yaml

4. Change the Pod CIDR in the custom resources

[root@node-ex01 ~]# grep cidr custom-resources.yaml

cidr: 192.168.0.0/16

[root@node-ex01 ~]#

[root@node-ex01 ~]# sed -i '/cidr/s#192.168#10.100#' custom-resources.yaml

[root@node-ex01 ~]#

[root@node-ex01 ~]# grep cidr custom-resources.yaml

cidr: 10.100.0.0/16

[root@node-ex01 ~]#
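The Pod CIDR in custom-resources.yaml has to agree with the "--cluster-cidr" given to kube-controller-manager and the "clusterCIDR" in kube-proxy.yml (10.100.0.0/16 in this guide). As a hedged sketch, the same sed expression can be tried on a scratch copy and the result compared with the expected value before touching the real file. The scratch directory and the one-line sample file are assumptions made for illustration.

```shell
#!/bin/bash
# Sketch: apply the CIDR substitution to a scratch copy and verify the result.
workdir="$(mktemp -d)"
expected_cidr="10.100.0.0/16"

# One-line stand-in for the cidr field of the downloaded manifest.
printf '      cidr: 192.168.0.0/16\n' > "${workdir}/custom-resources.yaml"

# Same expression as in the step above, written to a new file instead of in place.
sed '/cidr/s#192.168#10.100#' "${workdir}/custom-resources.yaml" \
    > "${workdir}/custom-resources.patched.yaml"

actual_cidr="$(awk '/cidr:/ {print $2}' "${workdir}/custom-resources.patched.yaml")"

if [ "${actual_cidr}" = "${expected_cidr}" ]; then
    echo "Pod CIDR matches: ${actual_cidr}"
else
    echo "Pod CIDR mismatch: got ${actual_cidr}, expected ${expected_cidr}" >&2
fi
```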

5. Create the resources

[root@node-ex01 ~]# kubectl create -f custom-resources.yaml

6. Check that the Pods were deployed successfully

[root@node-ex01 ~]# kubectl get pods -A

NAMESPACE NAME READY STATUS RESTARTS AGE

calico-apiserver calico-apiserver-6db8b74566-mcmt7 1/1 Running 0 51s

calico-apiserver calico-apiserver-6db8b74566-qbbzk 1/1 Running 0 51s

calico-system calico-kube-controllers-59d844fb78-9mxsj 1/1 Running 0 34s

calico-system calico-node-5pmrf 1/1 Running 0 33s

calico-system calico-node-lzxmw 1/1 Running 0 33s

calico-system calico-node-xjk4p 1/1 Running 0 33s

calico-system calico-typha-6c6c97f598-bdbg8 1/1 Running 0 34s

calico-system calico-typha-6c6c97f598-l4c8m 1/1 Running 0 34s

calico-system csi-node-driver-7lxrn 2/2 Running 0 32s

calico-system csi-node-driver-8fjkn 2/2 Running 0 33s

calico-system csi-node-driver-x7dk7 2/2 Running 0 32s

tigera-operator tigera-operator-76c4976dd7-7rwhs 1/1 Running 0 40m

[root@node-ex01 ~]#

Prerequisite: a Linux host with unrestricted access to Docker Hub, on which the images can be fetched with docker pull.

root@master231:~# docker pull docker.io/calico/apiserver:v3.29.1

root@master231:~# docker pull docker.io/calico/kube-controllers:v3.29.1

root@master231:~# docker pull docker.io/calico/pod2daemon-flexvol:v3.29.1

root@master231:~# docker pull docker.io/calico/cni:v3.29.1

root@master231:~# docker pull docker.io/calico/node:v3.29.1

root@master231:~# docker pull docker.io/calico/typha:v3.29.1

root@master231:softwares# cat calico-image.sh

#!/bin/bash

images=(

docker.io/calico/apiserver:v3.29.1

docker.io/calico/kube-controllers:v3.29.1

docker.io/calico/pod2daemon-flexvol:v3.29.1

docker.io/calico/cni:v3.29.1

docker.io/calico/node:v3.29.1

docker.io/calico/typha:v3.29.1

docker.io/calico/csi:v3.29.1

docker.io/calico/node-driver-registrar:v3.29.1)

for image in ${images[@]}

do

# echo "image: ${image}"

image_and_version=`echo ${image}| awk -F '/' '{print $3}'`

# echo "image name and tag: ${image_and_version}"

image_versions=(`echo ${image_and_version}| awk -F ':' '{print $1 " " $2}'`)

echo "name and tag array: ${image_versions[*]}"

docker save ${image} > /oldboyedu/softwares/oldboyedu-${image_versions[0]}-${image_versions[1]}.tar.gz

done
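The script above derives each archive name by splitting the image reference with awk on "/" and ":". A hedged alternative is to use shell parameter expansion, which takes the last path component regardless of how many "/" separators the reference contains. The function name image_to_tarball is hypothetical; the "oldboyedu-" prefix and ".tar.gz" suffix follow the script above.

```shell
#!/bin/bash
# Sketch: derive the archive file name from an image reference.
# image_to_tarball is an illustrative helper name.

image_to_tarball() {
    ref="$1"
    name_tag="${ref##*/}"     # strip everything up to the last "/", e.g. cni:v3.29.1
    name="${name_tag%%:*}"    # part before the ":", e.g. cni
    tag="${name_tag#*:}"      # part after the ":", e.g. v3.29.1
    echo "oldboyedu-${name}-${tag}.tar.gz"
}

for image in docker.io/calico/cni:v3.29.1 docker.io/calico/node:v3.29.1; do
    image_to_tarball "${image}"
done
```

Note that docker save writes an uncompressed tar archive, so the ".tar.gz" suffix is only a naming convention here.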

root@master231:softwares# scp oldboyedu-apiserver-v3.29.1.tar.gz 10.0.0.67:/oldboyedu/softwares

root@master231:softwares# scp oldboyedu-cni-v3.29.1.tar.gz 10.0.0.67:/oldboyedu/softwares

root@master231:softwares# scp oldboyedu-csi-v3.29.1.tar.gz 10.0.0.67:/oldboyedu/softwares

root@master231:softwares# scp oldboyedu-kube-controllers-v3.29.1.tar.gz 10.0.0.67:/oldboyedu/softwares

root@master231:softwares# scp oldboyedu-node-driver-registrar-v3.29.1.tar.gz 10.0.0.67:/oldboyedu/softwares

root@master231:softwares# scp oldboyedu-node-v3.29.1.tar.gz 10.0.0.67:/oldboyedu/softwares

root@master231:softwares# scp oldboyedu-pod2daemon-flexvol-v3.29.1.tar.gz 10.0.0.67:/oldboyedu/softwares

root@master231:softwares# scp oldboyedu-typha-v3.29.1.tar.gz 10.0.0.67:/oldboyedu/softwares

root@master231:~# cat > import-calico-v3.29.1.sh <<'EOF'

#!/bin/bash

IMAGES=(

oldboyedu-cni-v3.29.1.tar.gz

oldboyedu-csi-v3.29.1.tar.gz

oldboyedu-kube-controllers-v3.29.1.tar.gz

oldboyedu-node-driver-registrar-v3.29.1.tar.gz

oldboyedu-node-v3.29.1.tar.gz

oldboyedu-pod2daemon-flexvol-v3.29.1.tar.gz

oldboyedu-typha-v3.29.1.tar.gz

oldboyedu-apiserver-v3.29.1.tar.gz)

# echo ${IMAGES[*]}

for pkg in ${IMAGES[@]}

do

echo "importing image ---> ${pkg}"

ctr -n k8s.io i import ${pkg}

done

EOF

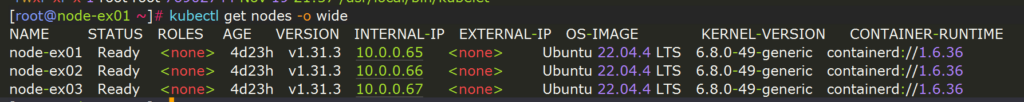

7. Check the node status again

[root@node-ex01 ~]# kubectl get nodes -o wide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

node-ex01 Ready <none> 4d23h v1.31.3 10.0.0.65 <none> Ubuntu 22.04.4 LTS 6.8.0-49-generic containerd://1.6.36

node-ex02 Ready <none> 4d23h v1.31.3 10.0.0.66 <none> Ubuntu 22.04.4 LTS 6.8.0-49-generic containerd://1.6.36

node-ex03 Ready <none> 4d23h v1.31.3 10.0.0.67 <none> Ubuntu 22.04.4 LTS 6.8.0-49-generic containerd://1.6.36

[root@node-ex01 ~]#

Recommended reading:

https://docs.tigera.io/calico/latest/getting-started/kubernetes/quickstart

8. Check whether the cluster nodes have taints

[root@node-ex01 ~]# kubectl describe nodes | grep Taints

Taints: <none>

Taints: <none>

Taints: <none>

[root@node-ex01 ~]#

16. Test that the cluster works

1. Enable kubectl shell completion

kubectl completion bash > ~/.kube/completion.bash.inc

echo "source '$HOME/.kube/completion.bash.inc'" >> $HOME/.bashrc

source $HOME/.bashrc

2. Create Deployment resources for testing

cat > deploy-apps.yaml <<EOF

apiVersion: apps/v1

kind: Deployment

metadata:

  name: yinzhengjie-app01

spec:

  replicas: 1

  selector:

    matchLabels:

      apps: v1

  template:

    metadata:

      labels:

        apps: v1

    spec:

      nodeName: node-exporter42

      containers:

      - name: c1

        image: registry.cn-hangzhou.aliyuncs.com/yinzhengjie-k8s/apps:v1

---

apiVersion: apps/v1

kind: Deployment

metadata:

  name: yinzhengjie-app02

spec:

  replicas: 1

  selector:

    matchLabels:

      apps: v2

  template:

    metadata:

      labels:

        apps: v2

    spec:

      nodeName: node-exporter43

      containers:

      - name: c1

        image: registry.cn-hangzhou.aliyuncs.com/yinzhengjie-k8s/apps:v2

EOF

3. Apply the manifest and verify

[root@node-ex01 ~]# kubectl apply -f deploy-apps.yaml

[root@node-ex01 ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

yinzhengjie-app01-f5cd494c9-bzfvg 1/1 Running 0 21s 10.100.173.69 node-exporter42 <none> <none>

yinzhengjie-app02-5d77969f8f-q7m25 1/1 Running 0 21s 10.100.246.196 node-exporter43 <none> <none>

[root@node-ex01 ~]#

[root@node-ex01 ~]# curl 10.100.173.69

<!DOCTYPE html>

<html>

<head>

<meta charset="utf-8"/>

<title>yinzhengjie apps v1</title>

<style>

div img {

width: 900px;

height: 600px;

margin: 0;

}

</style>

</head>

<body>

<h1 style="color: green">凡人修仙传 v1 </h1>

<div>

<img src="1.jpg">

<div>

</body>

</html>

[root@node-ex01 ~]#

[root@node-ex01 ~]# curl 10.100.246.196

<!DOCTYPE html>

<html>

<head>

<meta charset="utf-8"/>

<title>yinzhengjie apps v2</title>

<style>

div img {

width: 900px;

height: 600px;

margin: 0;

}

</style>

</head>

<body>

<h1 style="color: red">凡人修仙传 v2 </h1>

<div>

<img src="2.jpg">

<div>

</body>

</html>

[root@node-ex01 ~]#

4. Delete the resources

kubectl delete -f deploy-apps.yaml

5. Shut down and take a snapshot
